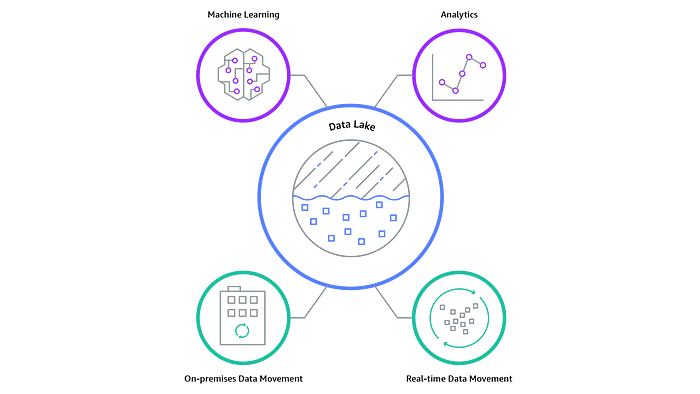

When I tackled my first data lake project, I found it difficult to understand what a data lake actually is. The concept finally clicked once I understood that there are three types of data: structured, semi-structured, and unstructured. In layman’s terms, a data lake is a data management solution that stores and processes raw data at scale, including data that doesn’t fit well into SQL databases (structured), data warehouses (structured), or NoSQL (mostly semi-structured) solutions. What sets it apart from other data management solutions is economical storage: because keeping data is cheap, you can answer business questions in the future that you otherwise might not have had the data for.
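To make the three data types more concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the `lake` dictionary is a hypothetical stand-in for object storage, and the keys, sample records, and helper names are invented for this example. The point it tries to show is that everything lands as raw bytes, and structure is applied only when the data is read.

```python
import csv
import io
import json

# Hypothetical stand-in for object storage: key -> raw bytes.
lake = {}


def put(key, text):
    """Store text as raw bytes, exactly as it arrived."""
    lake[key] = text.encode("utf-8")


# Structured: fixed columns, every row has the same shape.
put("raw/orders/orders.csv", "order_id,amount\n1001,25.50\n1002,13.00\n")

# Semi-structured: self-describing records whose fields can vary.
put(
    "raw/clicks/events.jsonl",
    '{"user": "a1", "event": "click"}\n'
    '{"user": "b2", "event": "purchase", "items": [1001, 1002]}\n',
)

# Unstructured: free text with no schema at all.
put("raw/logs/app.log", "2021-03-01 12:00:01 WARN payment gateway slow\n")


def read_orders():
    """Apply column names and types to the CSV at read time."""
    text = lake["raw/orders/orders.csv"].decode("utf-8")
    return [
        {"order_id": int(row["order_id"]), "amount": float(row["amount"])}
        for row in csv.DictReader(io.StringIO(text))
    ]


def read_events():
    """Parse one JSON document per line; fields may differ per record."""
    text = lake["raw/clicks/events.jsonl"].decode("utf-8")
    return [json.loads(line) for line in text.splitlines() if line]


def count_warnings():
    """Extract meaning from free text with a simple substring match."""
    text = lake["raw/logs/app.log"].decode("utf-8")
    return sum(" WARN " in line for line in text.splitlines())
```

Calling `read_orders()`, `read_events()`, and `count_warnings()` gives three different views over data that was stored without any upfront modelling, which is the property that lets a lake answer questions nobody had thought of when the data was collected.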

A robust data lake implementation brings a wealth of benefits. Analytics on new sources such as log files, click-stream data, social media, and IIoT can increase revenue by uncovering valuable insights, support better and faster business decisions, and deliver budget savings. When data is all in one place, cost-optimised for storage (AWS delivers automatic storage cost savings when access patterns change), catalogued, and democratised (available to everyone), you can expect lower OpEx as well. The benefits don’t stop there: cloud providers typically invest heavily in security and governance features, which reduces risk and helps you meet the highest compliance standards.

Now that you understand what a data lake is and what its main benefits are, you can start preparing your business for robust data analytics. While there are many use cases for data stored in data lakes, I’m particularly excited about machine learning, a branch of AI.

In my next article, I will give a brief overview of data lake architecture on AWS.

As always, for any data platform needs, please contact us at info@dataphoenix.io — a small end-to-end data solutions provider.
